I have two main hobbies at the moment. I'm one of the top currently active shaderhackers making video games work in stereo 3D (and one of the developers of 3DMigoto, which makes this possible), and I am also into photography. I sometimes combine the two in the form of stereo photography, and was recently asked about the subject.

Stereo photography can be a rather tricky subject due to some (unsolvable) technical issues that I'll touch on below, but it can be quite fun nevertheless.

Camera

The camera I mostly use for this is a Fujifilm FinePix Real 3D W3:

https://en.wikipedia.org/wiki/Fujifilm_FinePix_Real_3D

It has two lenses separated by roughly the distance between human eyes (actually a little wider than mine) and takes two photos of the same subject simultaneously from slightly different perspectives (it has other 3D modes as well, but nothing that couldn't be done with a regular camera). It also has a glasses-free 3D display built in ("sweet spot" based, meaning you have to look at it straight on), which lets you preview how the 3D photos will look, and is handy for showing subjects their own photo in 3D, which they always like.

It is also possible to take stereo photographs with any camera by taking two photos from slightly different perspectives, but it can be difficult to get the orientation to match between the two, and if the subject moves (or the wind blows a leaf, etc.) the photos will not quite match up between the eyes. Various rigs are available to remove some of the error from this process.

It is also possible to use two separate (preferably identical) cameras simultaneously, provided their settings (focus distance, focal length, f-stop) are identical and their shutters are synchronised. At some point I'd really like to try this setup using two DSLRs with "tilt-shift" lenses rather than ordinary lenses, as my experience working with stereo projections in computer graphics leads me to believe it could produce a superior stereo photograph if set up correctly for a known display size. Trying that would be somewhat expensive, though, and I have never heard of anyone else doing it.
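Under a simple pinhole model, the tilt-shift idea can be sketched: keep the two cameras parallel (never toed in) and shift each lens sideways so their framings overlap exactly at a chosen convergence distance, much like the off-axis projection used in games. The function name and the baseline, focal length and distance below are hypothetical example values, not measurements of any real rig:

```python
def lens_shift_mm(focal_length_mm: float, baseline_mm: float,
                  convergence_mm: float) -> float:
    """Sideways lens shift that makes two parallel cameras converge.

    Pinhole model (an assumption): a point straight ahead, midway between the
    cameras, lies baseline/2 off each camera's optical axis and so images
    focal * (baseline / 2) / distance away from the sensor centre. Shifting
    each lens by that amount (each framing moved towards the other camera)
    centres the point in both frames, so it ends up at screen depth.
    """
    if convergence_mm <= 0:
        raise ValueError("convergence distance must be positive")
    return focal_length_mm * (baseline_mm / 2) / convergence_mm


# Hypothetical rig: 50 mm lenses, 65 mm apart, converging at 2 m.
print(lens_shift_mm(50, 65, 2000))  # 0.8125 (mm per lens)
```

The convergence distance only decides what sits at screen depth; how strong the effect ends up still depends on the size of the display it is viewed on.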

Viewing Options

There are a number of options available to view a stereo photograph, each with its own advantages and disadvantages: computer monitors, TVs or projectors using either active shutter glasses or passive polarised glasses; anaglyph (red-cyan) glasses with any display; displays or photographs with a lenticular lens array over the top for glasses-free 3D viewing; or simply the cross-eyed, distance or mirror viewing techniques, which need no special display at all.

I personally have a laptop with a 3D display (no longer being manufactured), and a 3D DLP projector (BenQ W1070).

3D computer monitors usually use nvidia 3D Vision. They are 120Hz (or higher) active displays, and the V2 models feature a low-persistence backlight that turns off while the glasses switch eyes, reducing crosstalk and increasing the perceived brightness. They use nvidia's proprietary active shutter glasses, which run at 60Hz per eye. These displays are a pretty good choice, but do suffer from some degree of crosstalk and depend on nvidia's proprietary drivers (on Linux, the documentation suggests a Quadro card may be required, though I have seen reports that it might be possible to make it work with a GeForce card as we do on Windows).

3D televisions may use several different 3D formats. Side-by-side is usually the easiest option (though not necessarily the best, as it halves the horizontal resolution) and is supported by geeqie and mplayer. 3D televisions are a poor choice for stereo content: they tend to suffer from exceptionally bad crosstalk (each eye can see part of the image intended for the other eye) because their pixels take a long time to change, and they tend to have fairly high latency (fine for photos, not good for gaming). Their advantage is that they are fairly common and you may already have one. Which glasses they use, and whether those are active or passive, depends on the specific TV; I believe some use DLP glasses, which are standard.
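The resolution loss comes from how a side-by-side frame is packed: each view is squeezed to half width so both fit in one normal frame, and the TV stretches them back out. A minimal sketch, using plain lists of greyscale values instead of a real image library and naive pair-averaging rather than proper resampling (the function names are hypothetical):

```python
# Greyscale pixel rows stand in for real image data in this sketch.
Image = list[list[int]]


def half_width(image: Image) -> Image:
    """Squeeze an image to half its width by averaging neighbouring pixel pairs.

    With an odd width the final column is simply dropped.
    """
    return [[(row[x] + row[x + 1]) // 2 for x in range(0, len(row) - 1, 2)]
            for row in image]


def pack_side_by_side(left: Image, right: Image) -> Image:
    """Build a half-width side-by-side frame: left view on the left half."""
    if len(left) != len(right):
        raise ValueError("both views must have the same height")
    return [l_row + r_row
            for l_row, r_row in zip(half_width(left), half_width(right))]


frame = pack_side_by_side([[0, 2, 4, 6]], [[10, 12, 14, 16]])
print(frame)  # [[1, 5, 11, 15]]: same width as one input, half the detail per eye
```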

For 3D projectors we only really consider 3D DLP projectors. These are similar to 3D TVs, but generally a much better choice: they have zero crosstalk thanks to the speed at which the DLP mirrors can switch (much faster than even the best LCD), and when used for gaming they generally have much lower latency than TVs. Their disadvantages are the space required (short-throw models are available for smaller rooms), the need to keep the room dark (or use a rather expensive black projector screen), and having to replace the bulb every now and then. The active DLP glasses they use follow a standard, so you are not forced to buy the projector's own brand, though beware that the projector probably won't come with any and they will need to be purchased separately. The IR signal used to synchronise the glasses is emitted by the projector and simply bounced off the projector screen.

Given the typical screen size of a projector, these have the highest risk of violating infinity with pre-rendered content (displaying the left and right images of an object further apart than your eyes), and photos may need a parallax adjustment, offsetting their left and right images, to be comfortable to view. Movies are already calibrated for a larger screen (IMAX), so there is no need to worry there (though this is also part of why 3D movies generally suck), and games can calibrate to whatever screen size they are used with for the best result.
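A parallax adjustment like this is just a horizontal offset between the two views followed by a crop. A minimal sketch, with lists of pixel values standing in for images and disparity defined as x_right - x_left (positive means behind the screen); the function name is hypothetical:

```python
Image = list[list[int]]  # rows of pixel values, standing in for real images


def adjust_parallax(left: Image, right: Image, shift: int) -> tuple[Image, Image]:
    """Reduce every disparity (x_right - x_left) by `shift` pixels.

    A positive shift pulls the whole scene towards the viewer, which is what
    a photo that violates infinity on a big screen needs; a negative shift
    pushes it back. Both views lose |shift| columns so their widths still match.
    """
    if shift >= 0:
        # Trim the right edge of the left view and the left edge of the right view.
        return ([row[:len(row) - shift] for row in left],
                [row[shift:] for row in right])
    s = -shift
    # Opposite trim: every disparity grows by s.
    return ([row[s:] for row in left],
            [row[:len(row) - s] for row in right])


# One bright feature with 1 px of disparity: x=2 in the left view, x=3 in the right.
left, right = adjust_parallax([[0, 0, 9, 0, 0]], [[0, 0, 0, 9, 0]], 1)
print(left[0].index(9), right[0].index(9))  # 2 2, so it now sits at screen depth
```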

Anaglyph glasses are a low-cost option ($2 from eBay) that work with any display, but I would not recommend them for anything other than trying out 3D, since the false colours and high crosstalk cause eye strain. I cannot tolerate anaglyph for more than a few minutes, whereas I can comfortably wear active shutter glasses all day playing 3D games. On Linux, geeqie and mplayer can both output stereo content in several forms of anaglyph (trading off more realistic colours against less crosstalk between the eyes).
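The colour trade-off comes from how the two views are packed into colour channels. A minimal sketch of three common red-cyan variants, assuming the usual red-left/cyan-right glasses and 8-bit RGB pixels; these are textbook formulations for illustration, and geeqie and mplayer may well use different (for example optimised) matrices:

```python
Pixel = tuple[int, int, int]  # (red, green, blue), 0-255
Image = list[list[Pixel]]


def luma(p: Pixel) -> int:
    """Greyscale value using the Rec. 601 luma weights."""
    r, g, b = p
    return round(0.299 * r + 0.587 * g + 0.114 * b)


def red_cyan(left: Image, right: Image, mode: str = "colour") -> Image:
    """Combine two views into a red-cyan anaglyph (red filter over the left eye)."""
    out: Image = []
    for l_row, r_row in zip(left, right):
        row: list[Pixel] = []
        for lp, rp in zip(l_row, r_row):
            if mode == "colour":    # red from left, green + blue from right
                row.append((lp[0], rp[1], rp[2]))
            elif mode == "half":    # left reduced to grey, right keeps its colour
                row.append((luma(lp), rp[1], rp[2]))
            elif mode == "grey":    # both views grey: no colour left at all
                g = luma(rp)
                row.append((luma(lp), g, g))
            else:
                raise ValueError(f"unknown mode: {mode}")
        out.append(row)
    return out


left_view = [[(200, 10, 20)]]
right_view = [[(30, 40, 50)]]
for mode in ("colour", "half", "grey"):
    print(mode, red_cyan(left_view, right_view, mode))
```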

Displays with a lenticular lens array do not require glasses - the Fujifilm camera I use has one on the back. However, they usually require the viewer's head to be in a specific position (the "sweet spot"), though some use eye tracking to compensate for this in real time and can support a very small number of viewers anywhere in the room (I'm not sure whether any of those are consumer grade yet).

Fujifilm also makes a 3D photo frame aimed at users of their camera, with the same sort of lenticular lens array. I have yet to buy one, as I doubt its general usefulness: since it still has a sweet spot, the viewer must stand in a specific spot and cannot enjoy the photos from anywhere in the room.

It is also possible to print out a photo with the left and right views interlaced and place a lenticular lens array on the photo itself, allowing for 3D prints. Fujifilm has a service to do this, but it is not available in Australia and I have yet to track down an alternative print service available here. Apparently it is possible to purchase the supplies to do this yourself.
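Roughly speaking, a lenticular print alternates thin vertical strips of the left and right views, with one pair of strips under each lens. A minimal sketch of that interlacing, with lists standing in for images; the function names, printer resolution and lens pitch are hypothetical, and a real print service also has to handle registration and lens calibration:

```python
Image = list[list[int]]  # rows of pixel values, standing in for real images


def strip_width_px(printer_dpi: float, lens_lpi: float, views: int = 2) -> float:
    """Printer pixels per view under each lenticule.

    Each lenticule is 1 / lens_lpi inches wide, i.e. printer_dpi / lens_lpi
    printer pixels, which are shared between the views.
    """
    return printer_dpi / lens_lpi / views


def interlace_columns(left: Image, right: Image, left_first: bool = True) -> Image:
    """Alternate one-pixel columns from two equal-sized views.

    Assumes the views were already scaled so that one column equals one strip.
    Which view must come first under each lens depends on the lens sheet,
    so it is a parameter rather than a fixed rule.
    """
    out: Image = []
    for l_row, r_row in zip(left, right):
        out.append([l_row[x] if (x % 2 == 0) == left_first else r_row[x]
                    for x in range(len(l_row))])
    return out


print(strip_width_px(600, 60))                            # 5.0
print(interlace_columns([[1, 1, 1, 1]], [[2, 2, 2, 2]]))  # [[1, 2, 1, 2]]
```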

The cross-eyed and distance viewing methods do not require any special display, as they are simply techniques for viewing a pair of stereo images placed side-by-side. The images must be fairly close together and should each be no more than about 7cm wide, perhaps even less; the further apart the images are on the screen, the harder these techniques are to achieve. They will not give you the full impact of glasses with a proper 3D display, but they cost nothing and become easy with a bit of practice.
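To turn the rough 7cm guideline into pixels for a particular screen, you need the display's pixel density. A minimal sketch; the function names are hypothetical, the 24-inch 1920x1080 monitor is just an example, and the 7cm default is the rough figure from the paragraph above rather than a hard limit:

```python
import math


def ppi(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixels per inch of a display from its resolution and diagonal size."""
    return math.hypot(width_px, height_px) / diagonal_in


def max_freeview_width_px(display_ppi: float, max_width_cm: float = 7.0) -> int:
    """Widest each image can be drawn (in pixels) while staying under max_width_cm."""
    return math.floor(max_width_cm / 2.54 * display_ppi)


# Hypothetical 24" 1920x1080 monitor: roughly 92 PPI.
print(max_freeview_width_px(ppi(1920, 1080, 24)))  # 252
```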

This is an example of a photo I took, with the left and right views reversed for cross-eyed viewing. The trick is to go cross-eyed until the two images merge into one. To practise, hold your finger up halfway between your face and the display and look at your finger instead of the display. Keep focused on your finger and slowly move it forwards or backwards until the images on the display behind it have merged, then try to refocus your eyes on the 3D image without pointing them back at the screen. It may take a few attempts to get used to the technique.

This image is set up for the distance viewing method. For this method you need to relax your eyes, letting them defocus from the screen and look behind the display until the images merge, then try to refocus on the image without looking back at the screen.
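Since the two methods differ only in which view sits on which side, converting a distance-viewing (parallel) pair into a cross-eyed one, or back, is a matter of swapping the two halves. A minimal sketch on a side-by-side image stored as rows of pixel values (a stand-in for a real image; the function name is hypothetical):

```python
Image = list[list[int]]  # rows of pixel values, standing in for real images


def swap_halves(sbs: Image) -> Image:
    """Swap the left and right halves of a side-by-side image.

    Turns a parallel (left view | right view) pair into a cross-eyed
    (right view | left view) pair; applying it twice restores the original.
    """
    out: Image = []
    for row in sbs:
        if len(row) % 2:
            raise ValueError("side-by-side image must have an even width")
        half = len(row) // 2
        out.append(row[half:] + row[:half])
    return out


print(swap_halves([[1, 1, 2, 2]]))  # [[2, 2, 1, 1]]
```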

The mirror technique works by placing a mirror in front of your nose (in this case facing to the left) so that you can see a reflection of the image in it. Focus on the image in the mirror and it should pop into 3D. This can be easier than the above techniques since it does not require your eyes to point in a different direction to their focus, and it can comfortably be used to view larger stereo images, though it can be difficult to fit the entire image in the mirror (you may have to move your head back or forwards). Also, since most mirrors are imperfect (especially at this angle), they may show a double image.
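Because a mirror reverses whatever it reflects, the half of the pair seen through the mirror has to be flipped horizontally beforehand so that it reads the right way round in the reflection. A minimal sketch; the function name is hypothetical, and which half to flip depends on which way the mirror faces, so it is a parameter here rather than a claim about how the mirror versions in this post were made:

```python
Image = list[list[int]]  # rows of pixel values, standing in for real images


def mirror_one_half(sbs: Image, flip_left_half: bool) -> Image:
    """Horizontally flip one half of a side-by-side pair for mirror viewing."""
    out: Image = []
    for row in sbs:
        if len(row) % 2:
            raise ValueError("side-by-side image must have an even width")
        half = len(row) // 2
        left, right = row[:half], row[half:]
        if flip_left_half:
            left = left[::-1]
        else:
            right = right[::-1]
        out.append(left + right)
    return out


print(mirror_one_half([[1, 2, 3, 4]], flip_left_half=True))  # [[2, 1, 3, 4]]
```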

Subjects

I've found that some subjects work well in stereo that don't work at all in 2D, yet just as many work better in 2D than 3D. If you ever see a scene that looks really interesting to your eyes, but plain and uninteresting in a 2D photo once the depth has been lost (or replaced with depth of field blur), it might just be a candidate to try in stereo - here's a good example:

[Stereo photo: cross-eyed, distance, mirror left, mirror right and anaglyph versions]

In 2D all the rocks blend together and it becomes a plain and uninteresting shot, but in 3D the individual rocky outcroppings can easily be distinguished from one another and the shot is interesting. Here's another example:

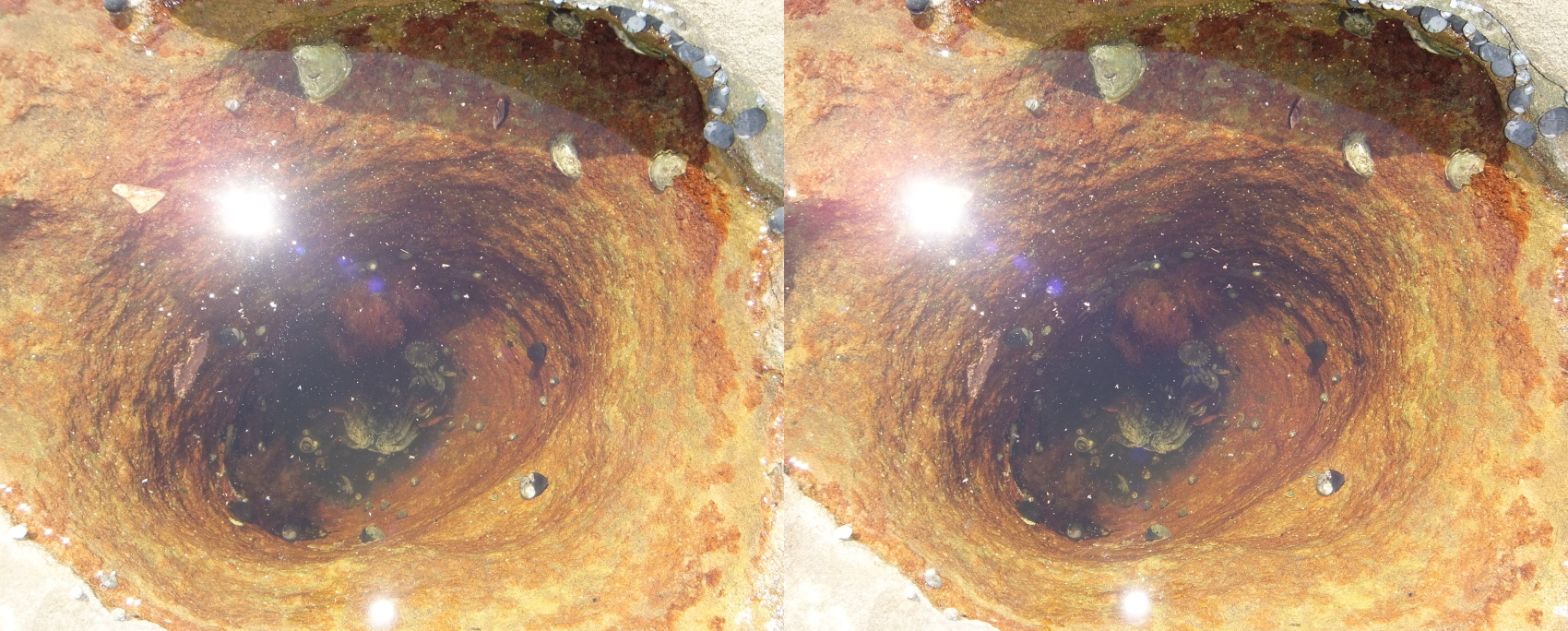


In 2D there is nothing interesting about this shot and I would delete it, but in 3D the depth of the hole is apparent and the shot becomes interesting (still not really a keeper, just interesting as a demonstration of 3D).

If the subject will not gain much from 3D, it may be better shot in 2D with the additional control a DSLR provides, and without the technical problems stereo photography brings. 3D tends to work better for closer subjects than for those further away, and when the subject links multiple depths together.

If the subjects are too far away or too far apart, they may appear as layered 2D images, which can be OK but does not really do stereo photography justice. Zooming in on a distant subject will not provide the same stereo effect as moving closer to it (the same thing happens in 2D - you might be familiar with the dolly zoom effect - but in 3D it is far more pronounced).
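Under a simple pinhole model with parallel cameras (an assumption that ignores the camera's real optics), the difference can be worked out: zooming scales an object's depth (its disparity range) and its size in the frame by the same factor, so it looks just as flat as before, only bigger, while walking closer grows its depth faster than its size. A minimal sketch; the function names and all scene numbers are hypothetical:

```python
import math


def disparity(focal: float, baseline: float, depth: float) -> float:
    """Disparity on the sensor of a point at `depth` (parallel pinhole cameras)."""
    return focal * baseline / depth


def roundness(focal: float, baseline: float, distance: float,
              width: float, depth_extent: float) -> float:
    """An object's front-to-back disparity range divided by its image width.

    Values near zero mean the object looks like a flat cut-out.
    """
    near = disparity(focal, baseline, distance)
    far = disparity(focal, baseline, distance + depth_extent)
    image_width = focal * width / distance
    return (near - far) / image_width


# Hypothetical scene (all lengths in mm): a rock 1 m wide and 0.5 m deep,
# photographed by cameras 65 mm apart.
wide = roundness(35, 65, 20_000, 1_000, 500)        # 20 m away, 35 mm
zoomed = roundness(105, 65, 20_000, 1_000, 500)     # 20 m away, 3x zoom
closer = roundness(35, 65, 20_000 / 3, 1_000, 500)  # walk to about 6.7 m instead
print(math.isclose(wide, zoomed), closer / wide)    # True, about 2.86
```

The focal length cancels out of the ratio entirely, which is why zooming cannot substitute for getting closer.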

For instance, this photo did not gain much from being shot in stereo as everything is just too far away and the effect is not very pronounced (displaying this on a larger screen may help a little):

[Stereo photo: cross-eyed, distance, mirror left, mirror right and anaglyph versions]

Stereo photography can work especially well to show detail that is lost in a 2D image. Most photographers will see running water and immediately set their camera to a longer exposure to get that classic artistic streaking effect, but in 3D you might do the opposite and freeze the water in the frame so you can examine its structure in detail (I have better examples, but none that I can post here):

[Stereo photo: cross-eyed, distance, mirror left, mirror right and anaglyph versions]

In video games, playing in stereo brings out a lot of detail that players would usually ignore - grass, leaves and rocks are no longer just there to "not look weird because they are missing" - they now have real detail and players will stop and admire just how much effort the 3D artist put into them (or in some cases how little). The same works in a stereo photo - if I were taking these in 2D I would probably have focused on an individual flower or leaf and used depth of field to emphasise it, but in 3D the wider scene is interesting as the detail on every single flower, leaf and blade of grass is apparent (if possible, best viewed on a larger screen to see the detail more clearly):

[Three stereo photos, each in cross-eyed, distance, mirror left, mirror right and anaglyph versions]

Issues

The Fujifilm camera should only be used in landscape orientation when both lenses are in use, since the lenses must be aligned horizontally. Otherwise the images will be misaligned between the eyes, causing eye strain and making them unpleasant to view in stereo (if it is possible at all). This can be corrected in post to some extent, but only to a point: if the camera was rotated a full 90 degrees it will not be possible to correct (though you could still salvage either of the two images as a 2D photo).

That's not to say that portraits can't be taken in stereo, but the lenses have to be aligned horizontally, whether that means using a different rig or taking a wider-angle landscape shot and cropping it to portrait.
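The reason the correction only goes so far can be sketched with a simple model (parallel pinhole cameras, an assumption): if the camera is rolled by some angle and the photo is then levelled, each point's disparity gains a vertical component proportional to that disparity, and disparity varies with depth. A single vertical shift of one view can therefore line up only one depth; everything nearer or further stays misaligned. The function names, focal length (in pixels), baseline and depths below are hypothetical:

```python
import math


def disparity_px(focal_px: float, baseline_mm: float, depth_mm: float) -> float:
    """Disparity along the lens baseline for a point at depth_mm."""
    return focal_px * baseline_mm / depth_mm


def residual_vertical_px(focal_px: float, baseline_mm: float, roll_deg: float,
                         depth_mm: float, aligned_depth_mm: float) -> float:
    """Vertical misalignment left at depth_mm after levelling the photo and
    shifting one view vertically so objects at aligned_depth_mm line up."""
    s = math.sin(math.radians(roll_deg))
    return (disparity_px(focal_px, baseline_mm, depth_mm)
            - disparity_px(focal_px, baseline_mm, aligned_depth_mm)) * s


def horizontal_disparity_px(focal_px: float, baseline_mm: float,
                            roll_deg: float, depth_mm: float) -> float:
    """Horizontal (depth-carrying) disparity that survives the roll."""
    return (disparity_px(focal_px, baseline_mm, depth_mm)
            * math.cos(math.radians(roll_deg)))


# Camera rolled 10 degrees, views aligned for a subject 5 m away:
print(residual_vertical_px(1000, 65, 10, 1000, 5000))  # about 9 px left at 1 m
# A full 90 degree roll leaves essentially no horizontal disparity:
print(horizontal_disparity_px(1000, 65, 90, 1000))
```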

A stereo camera sees the world in much the same way our eyes do:

```
\   \    /   /
 \   \  /   /
  \   \/   /
   \  /\  /
    \/  \/
```

The problem is that the image is not beamed directly into our eyes, but has to be shown on an intermediate display, which we don't see in quite the same way. There's not much that can be done about this in photography or filmography, which is one of several reasons 3D movies are usually not considered very good. I do think a pair of tilt-shift lenses could help here, but even that would not help with the fact that we do not know ahead of time what size display will be used to view the image later.

The display size matters because if the left and right images of an object are displayed further apart on the screen than the viewer's eyes (regardless of how far away the display is), the object will appear to be beyond infinity, which quickly becomes uncomfortable or impossible to view. The only way to combat this is to shift the offset of the two images until nothing is more than 7cm apart on the largest display the content might ever be shown on. Displaying the content on a smaller screen will then quickly diminish the strength of the stereo effect. This is another reason 3D movies are considered poor: they are calibrated for screens as large as the IMAX theatre in Sydney, so their 3D effect is reduced on anything smaller, and by the time you are viewing them in a home theatre there is almost no 3D left.
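The infinity rule reduces to simple arithmetic: an object's separation on screen is its disparity in pixels scaled by the screen's physical width. A minimal sketch, defining disparity as x_right - x_left in the source image (positive means behind the screen); the function names and photo dimensions are hypothetical:

```python
import math

EYE_SEPARATION_CM = 7.0  # the working figure used above; a smaller value is more cautious


def on_screen_separation_cm(disparity_px: float, image_width_px: int,
                            screen_width_cm: float) -> float:
    """How far apart an object's two images land on a screen of a given width."""
    return disparity_px * screen_width_cm / image_width_px


def largest_safe_screen_cm(max_disparity_px: float, image_width_px: int,
                           eye_cm: float = EYE_SEPARATION_CM) -> float:
    """Widest screen on which the most distant object stays within eye separation."""
    if max_disparity_px <= 0:
        return math.inf  # nothing behind the screen plane, so no limit from this rule
    return eye_cm * image_width_px / max_disparity_px


def shift_needed_px(max_disparity_px: int, image_width_px: int,
                    screen_width_cm: float, eye_cm: float = EYE_SEPARATION_CM) -> int:
    """Pixels by which every disparity must shrink to fit a given screen."""
    allowed = math.floor(eye_cm * image_width_px / screen_width_cm)
    return max(0, max_disparity_px - allowed)


# Hypothetical 1920 px wide photo whose most distant point has 40 px of disparity:
print(largest_safe_screen_cm(40, 1920))  # 336.0 (cm wide)
print(shift_needed_px(40, 1920, 300))    # 0: fine on a 3 m screen
print(shift_needed_px(40, 1920, 400))    # 7: needs adjusting on a 4 m screen
```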

But video games do not suffer from this problem: they are rendered live, know the size of the display they are being rendered on, and can use this information to skew the projection so that each eye's viewing frustum touches the edges of the screen at the point of convergence. They can also dial the overall strength of the 3D effect and the point of convergence up and down as desired:

```
\-    \                         /    -/
  \-   \                       /   -/
    \-  \                     /  -/
      \- \     screen of     / -/
        \-\   known size    /-/
          \-----------------/ <-- point of convergence
           \\-           -//
            \ \-       -/ /
             \  \-   -/  /
              \   \-/   /
               \ -/ \- /
                o     o
```
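The skewed projection in the diagram is the standard off-axis (asymmetric frustum) technique. A minimal sketch of the per-eye frustum bounds, in the form glFrustum-style APIs take them; the names, screen size and distances are hypothetical, and drivers such as nvidia's 3D Vision may achieve the equivalent effect differently under the hood:

```python
import math
from dataclasses import dataclass


@dataclass
class Frustum:
    """glFrustum-style bounds, measured on the near plane."""
    left: float
    right: float
    bottom: float
    top: float
    near: float


def eye_frustum(eye_x: float, screen_w: float, screen_h: float,
                screen_dist: float, near: float) -> Frustum:
    """Asymmetric frustum for one eye looking at a screen of known size.

    eye_x is the eye's horizontal offset from the screen centre (negative for
    the left eye), in the same units as screen_w. Both frustum edges pass
    through the physical screen edges at screen_dist, the point of
    convergence. The view itself must also be translated by eye_x.
    """
    scale = near / screen_dist
    return Frustum(left=(-screen_w / 2 - eye_x) * scale,
                   right=(screen_w / 2 - eye_x) * scale,
                   bottom=-screen_h / 2 * scale,
                   top=screen_h / 2 * scale,
                   near=near)


# Hypothetical setup in cm: 6.5 cm eye separation, screen 37.6 x 21.2 cm at 60 cm.
left_eye = eye_frustum(-3.25, 37.6, 21.2, 60, 0.1)
right_eye = eye_frustum(3.25, 37.6, 21.2, 60, 0.1)
# Extending the left eye's left edge to the screen lands exactly on the screen edge:
print(math.isclose(-3.25 + left_eye.left * 60 / 0.1, -37.6 / 2))  # True
```

In this model, dialling the strength up or down corresponds to changing eye_x, and moving the point of convergence corresponds to changing screen_dist.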

3D screenshots of games are still a problem, however: if they are scaled up to a larger display they may violate infinity, and if they are scaled down to a smaller display their 3D effect is reduced. With that in mind, here are some screenshots I have taken in various games, calibrated for a 17" display, to compare with the photos:

http://photos.3dvisionlive.com/DarkStarSword/

Without the nvidia plugin that site is pretty useless, but I made a user script that adds a download button to get at the raw side-by-side images, which can be saved as .jps files and opened with a stereo photo viewer such as geeqie (Linux), sView (Windows, Linux, Mac, Android) or nvidia photo viewer (Windows):

https://github.com/DarkStarSword/3d-fixes/raw/master/3dvisionlive_download_button.user.js